Retency Privacy Engine draws on the vast research concerning Secure Multi-Party Computing, and in particular Private Set Intersection (PSI). The objective of this page is to provide an overview of the scientific context and earlier finding that led to the creation of Retency Privacy Engine.

Private set intersection is a secure multiparty computation cryptographic technique (1) that allows two parties holding sets to compare encrypted versions of these sets in order to compute the intersection. In this scenario, neither party reveals anything to the counterparty except for the elements in the intersection.

Introduced in 2006, Differential Privacy is a requirement for publicly sharing information about a dataset by describing certain group patterns within the dataset while withholding information about individuals in the dataset. The intuition is that a person's privacy cannot be compromised if any database query is unlikely to provide a different result, when this person is in the database or not. In other words, if S is a set of information built from personal data, it must be nearly impossible to detect if the personal data of a given individual has contributed or not to S.

Differential Privacy is not a method or an algorithm, but rather a very universal criterium to assess the degree of protection actually provided by a statistical database – and in particular to assess the risk of learning specific things about a given individual.

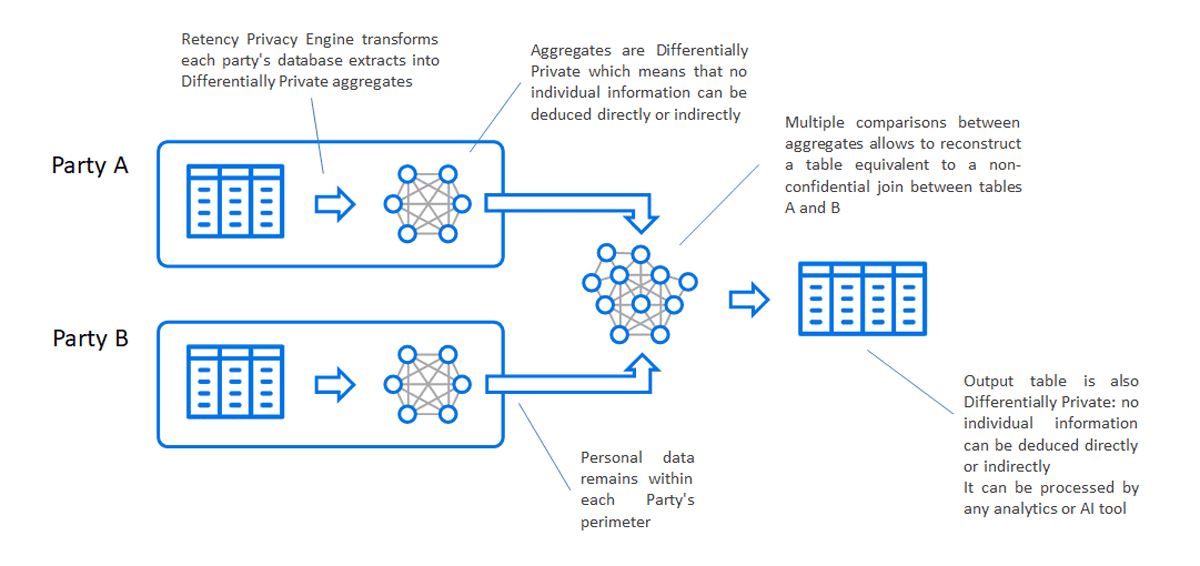

Various ways can be used to obtain a Differentially Private database. Some papers indicate that Differential Privacy can only be achieved through the direct addition of noise. Other means exist that avoid direct noise addition. The approach taken by Retency is to generate from raw data Differentially Private information sets, whose own content is subject to a statistical uncertainty of known characteristics. Therefore, the content is insensitive to the addition of a small quantity of information (and therefore insensitive to the addition a single individual information). The DP set can either not be tested to the presence (within the raw data used) of a single or a small group of individuals.

The algorithms at the core of Retency Privacy Engine are a combination of Private Set Intersection and Differential Privacy:

This two-stage approach, which is transparent to the user, brings a number of unique benefits:

The table below contains interesting background research references on Secure Multi-Party Computing and Differential Privacy.

| Title | Authors | Institution |

|---|---|---|

| Differentially Private SQL with Bounded User Contribution (2019) | Wilson, Zhang, Lam, Desfontaines, Simmons-Marengo, Gipso | |

| DJoin: Differentially Private Join Queries over Distributed Databases (2012) | Narayan, Haeberlen | University of Pennsylvania |

| Efficient Private Matching and Set Intersection (2004) | Freedman, Nissim, Pinkas | New York University, Microsoft, HP |

| Learning with Privacy at Scale (2019) | Privacy Team | Apple |

| Efficient Robust Private Set Intersection (2009) | Dachman-Soled, Malkin, Raykova, Yung | Columbia University |